Enterprise IT requirements are demanding: solutions are expected to be reliable, scalable, and continuously available. Databases commonly meet these demands through clustering, in which several instances connect and appear and operate as a single logical unit.

Neo4j’s clustering is well documented, but for exploration and learning it helps to get a cluster up and running as quickly as possible. This post demonstrates how to use Docker to have a Neo4j causal cluster running in a matter of minutes.

Neo4j causal clustering

Causal clustering, introduced as a cornerstone feature of Neo4j 3.1, enables support for ultra-large clusters and a wide range of cluster topologies. Causal clustering is described by Neo4j as “safer, more intelligent, more scalable and built for the future.”

Docker

Docker is a tool designed to make it easy to create, deploy, and run applications by using containers. Containers allow developers to package an application with all of the components it needs and distribute it as a single, self-contained unit that can run on any host with Docker installed.

Docker installation

Before we start clustering, Docker must be installed on your system, following the installation instructions in Docker’s documentation. In a nutshell, you decide which Docker edition is right for you (Community Edition or Enterprise Edition), download the corresponding version, and install it on your operating system.

While Docker prides itself on being lean and mean, the applications you run may need more memory than the 2 GB Docker is configured with out of the box. That is the case with our Neo4j cluster, and instructions are available for increasing Docker’s memory on macOS and Windows. If your cluster fails to start or becomes unavailable under load, make sure you have increased the memory available to Docker.

Starting the cluster

With Docker installed and its memory adjusted, it’s time to start the five-instance (four core, one read replica) cluster:

- Download the docker-compose.yml

- Open a command shell in the same directory and execute: docker-compose up

That’s it! Once the instances have started and discovered each other, the cluster is up and running.

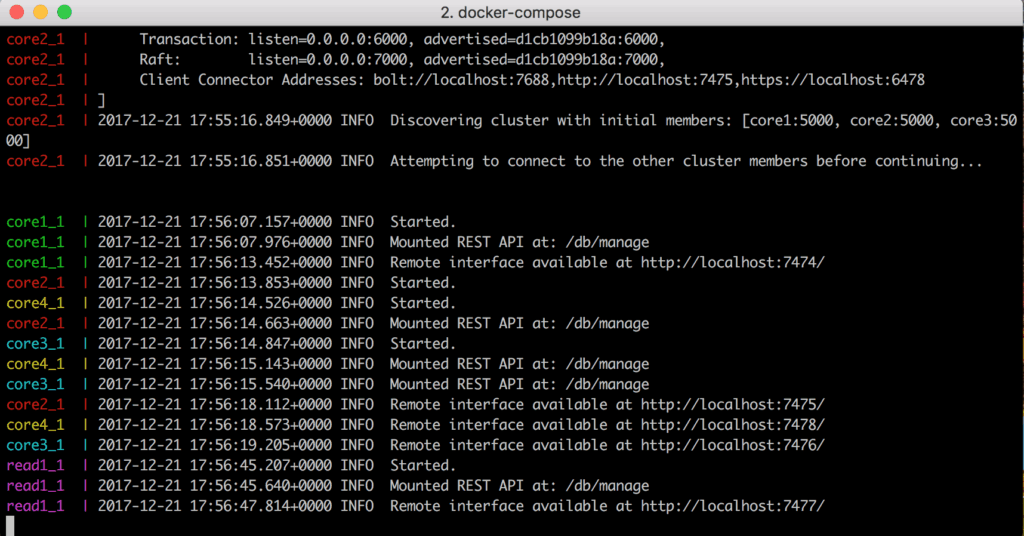

Figure 1 shows the console output of the “docker-compose up” command, confirming that each instance has started successfully.
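If you would rather script the wait than watch the console, a small poller can help. The following is a minimal Python sketch and is not part of the original setup. The `wait_for_ports` helper is hypothetical. It only checks that each published port accepts a TCP connection. That shows the containers are listening, but it does not by itself prove the cluster has finished forming.

```python
import socket
import time


def wait_for_ports(host, ports, timeout=120.0, interval=2.0):
    """Poll until every port in `ports` accepts a TCP connection on `host`.

    Returns True if all ports became reachable before `timeout` seconds
    elapsed, otherwise False. An open port only means something is
    listening; it does not prove the cluster has formed.
    """
    deadline = time.monotonic() + timeout
    pending = set(ports)
    while True:
        # Iterate over a sorted copy so we can discard from `pending` safely.
        for port in sorted(pending):
            try:
                # create_connection raises OSError while nothing is listening yet
                with socket.create_connection((host, port), timeout=interval):
                    pending.discard(port)
            except OSError:
                pass
        if not pending:
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

For example, `wait_for_ports("localhost", [7474, 7475, 7476, 7477])` would wait up to two minutes for those HTTP ports. Adjust the list to match the ports published in your docker-compose.yml.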

Cluster exploration with the Neo4j Browser

The Neo4j Browser is a web-based tool commonly used to query, visualize, and interact with data in Neo4j. The console output in Figure 1 and the docker-compose.yml (which we look at in more detail later in this post) show that the cluster instances are configured for HTTP access on ports 7474, 7475, 7476, 7477, and 7478. Just as with a standalone Neo4j instance, you can point a web browser at these ports to open the Neo4j Browser:

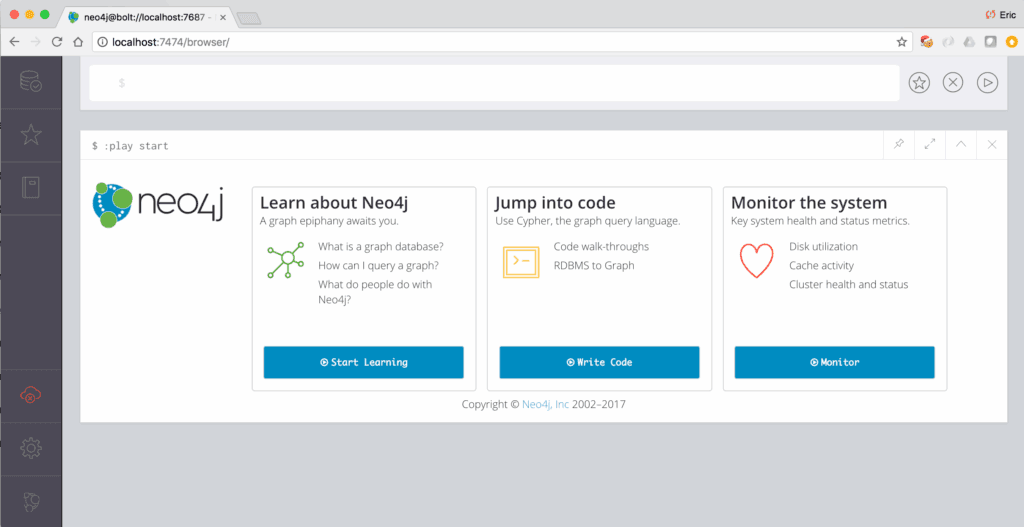

Figure 2 shows the Neo4j Browser connected to the core1 instance on port 7474.

Leader vs non-leader

With some caveats, using a cluster is much like using a standalone Neo4j instance.

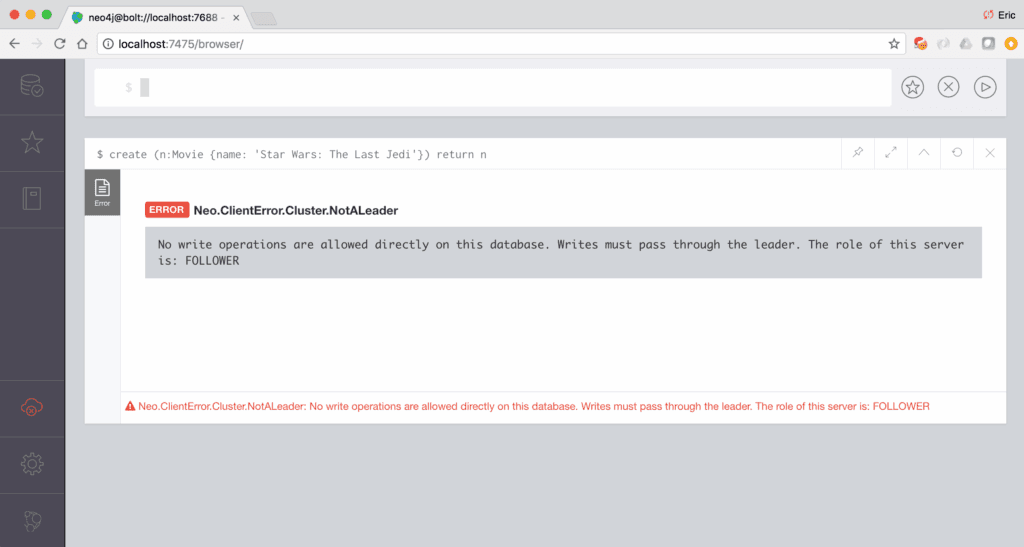

One of these caveats is that all write operations must be served by the instance the cluster currently considers the leader. If you execute a write operation against a non-leader instance (i.e., a follower or read replica), the write is rejected with an error.

Figure 3 shows the error message received when executing a write operation against a non-leader cluster instance.

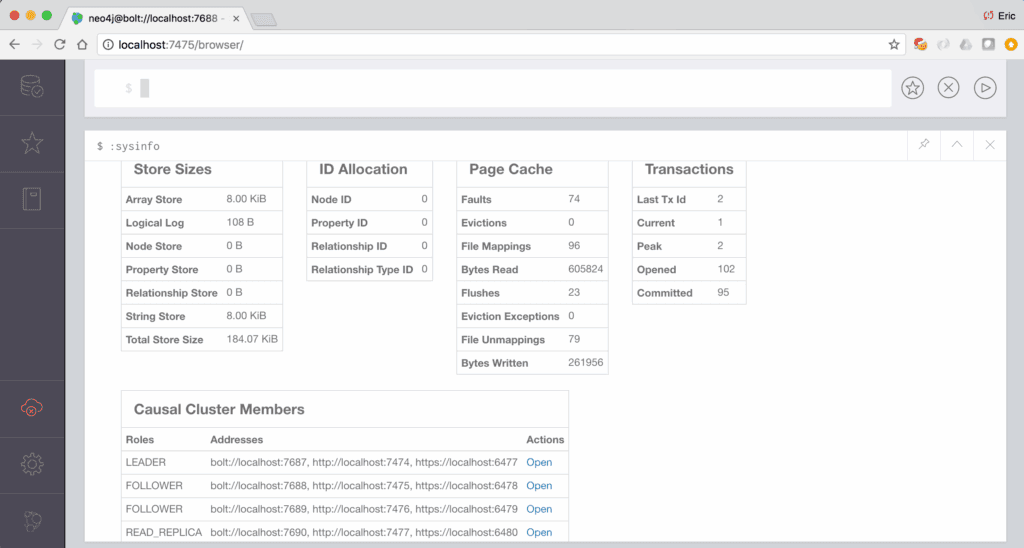

Which instance the cluster considers the leader can change over time. So how do you know which instance is currently the leader? For applications using a Neo4j driver with routing enabled, directing writes to the current leader is handled transparently. For our use case, the information is available in several ways, as described in the cluster monitoring documentation. These include running the “CALL dbms.cluster.role()”, “CALL dbms.cluster.overview()”, or “:sysinfo” commands in the Neo4j Browser.

Figure 4 shows the output of the :sysinfo command, which includes a “Causal Cluster Members” section listing every cluster instance along with its ports and role. If a write fails in the Neo4j Browser because you are connected to a non-leader instance, use this table to reconnect to the current leader and re-run the operation.
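The same lookup can be automated. Below is a minimal Python sketch that picks the leader’s Bolt address out of “CALL dbms.cluster.overview()” results. It assumes each result row has already been converted to a dict with a `role` string (such as `LEADER`, `FOLLOWER`, or `READ_REPLICA`) and an `addresses` list of URIs. Fetching the rows from Neo4j is left out. The helper name and the sample rows are hypothetical.

```python
def leader_bolt_address(overview_rows):
    """Return the Bolt URI of the current leader, or None if none is found.

    Each row is assumed to be a dict converted from one record of
    CALL dbms.cluster.overview(), with a "role" string and an
    "addresses" list of URIs.
    """
    for row in overview_rows:
        if row.get("role") == "LEADER":
            # A member advertises several URIs (bolt, http, https); keep the Bolt one.
            for address in row.get("addresses", []):
                if address.startswith("bolt://"):
                    return address
    return None


# Hypothetical sample rows; real hostnames and ports depend on your cluster.
sample = [
    {"role": "FOLLOWER", "addresses": ["bolt://localhost:7687", "http://localhost:7474"]},
    {"role": "LEADER", "addresses": ["bolt://localhost:7688", "http://localhost:7475"]},
    {"role": "READ_REPLICA", "addresses": ["bolt://localhost:7690", "http://localhost:7477"]},
]
print(leader_bolt_address(sample))  # prints bolt://localhost:7688
```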

Application Configuration

Another caveat of working with a causal cluster is that applications built on libraries such as Object Graph Mapping (OGM) or Spring Data Neo4j (SDN) must update their driver configuration to work with the cluster. According to the documentation, the connection URI must be changed to take advantage of routing, from:

```
bolt://<host>:<port>
```

to:

```
bolt+routing://<host>:<port>
```

Beyond configuration, the OGM documentation also offers design considerations that should be baked into the application.
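To illustrate the URI change, here is a small Python sketch built around a hypothetical helper, `to_routing_uri`. It rewrites a direct bolt:// URI to bolt+routing:// and keeps the host and port. It is not part of OGM or SDN, which read the URI from their own configuration. It simply shows which part of the URI changes.

```python
from urllib.parse import urlsplit, urlunsplit


def to_routing_uri(uri):
    """Rewrite a direct bolt:// URI to the bolt+routing:// scheme.

    Host, port, and any path or query are preserved. URIs that already use
    bolt+routing:// are returned unchanged; any other scheme is rejected.
    """
    parts = urlsplit(uri)
    if parts.scheme == "bolt+routing":
        return uri
    if parts.scheme != "bolt":
        raise ValueError(f"expected a bolt:// URI, got {uri!r}")
    # Only the scheme changes; netloc (host:port) is carried over as-is.
    return urlunsplit(parts._replace(scheme="bolt+routing"))
```

For example, `to_routing_uri("bolt://localhost:7687")` yields `bolt+routing://localhost:7687`.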

How It Works: docker-compose.yml

The docker-compose.yml can be thought of as a recipe instructing Docker how to create and configure containers of Neo4j instances that work together. It creates a five-instance cluster with four core (i.e., read and write) instances and one read replica (i.e., read-only) instance.

While the docker-compose.yml may look complex and dense, there is a single central pattern to understand that is repeated throughout the file. Let’s take a look at this pattern:

```yaml
core1:
  image: neo4j:3.3.1-enterprise
  networks:
    - lan
  ports:
    - 7474:7474
    - 6477:6477
    - 7687:7687
  volumes:
    - $HOME/neo4j/neo4j-core1/conf:/conf
    - $HOME/neo4j/neo4j-core1/data:/data
    - $HOME/neo4j/neo4j-core1/logs:/logs
    - $HOME/neo4j/neo4j-core1/plugins:/plugins
  environment:
    - NEO4J_AUTH=neo4j/changeMe
    - NEO4J_dbms_mode=CORE
    - NEO4J_ACCEPT_LICENSE_AGREEMENT=yes
    - NEO4J_causalClustering_expectedCoreClusterSize=3
    - NEO4J_causalClustering_initialDiscoveryMembers=core1:5000,core2:5000,core3:5000
    - NEO4J_dbms_connector_http_listen__address=:7474
    - NEO4J_dbms_connector_https_listen__address=:6477
    - NEO4J_dbms_connector_bolt_listen__address=:7687
```

This configuration snippet instructs Docker to:

- Create an instance named “core1” using Neo4j Enterprise Edition version 3.3.1

- Attach the instance to a network named “lan”, over which all instances can communicate with each other

- Map ports 7474, 6477, and 7687 on the host machine (i.e., your computer) to the same ports in the container

- Map Neo4j’s conf, data, logs, and plugins directories to your local filesystem under $HOME/neo4j, with a dedicated directory for each instance

- Set environment variables that are passed to the Neo4j instance, covering authentication, license acceptance, cluster configuration, and port numbers. See Neo4j’s Configuration Settings for detailed descriptions of each option.

With the core1 configuration understood, you’ll recognize a lot of repetition in the rest of the docker-compose.yml. In fact, apart from their names and per-instance directories, core1, core2, and core3 differ only in the ports they listen on for connections.

The only instance with a slight twist is read1, which is a read replica (i.e., read-only) rather than a core (i.e., read and write) instance. This is because its NEO4J_dbms_mode property is set to READ_REPLICA rather than CORE. It shows how easy it is to add read replicas to a cluster and boost its read scalability.
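To make the repeated pattern explicit, the sketch below builds every service entry from a single template in Python. It illustrates the pattern and rests on several assumptions. It is not the actual docker-compose.yml. The helper names are hypothetical, and so are the `version: "3"` header and the empty `lan` network definition. Because the post only lists core1’s ports, each later instance simply gets core1’s ports shifted by one per instance. The discovery members and expected core cluster size are derived from the list of core names, which reproduces the snippet’s values for core1 to core3.

```python
import json

# Values copied from the core1 snippet; everything else is derived.
IMAGE = "neo4j:3.3.1-enterprise"
NEO4J_DIRS = ("conf", "data", "logs", "plugins")


def neo4j_service(name, mode, http_port, https_port, bolt_port, core_names):
    """Build one service entry following the core1 pattern (hypothetical helper)."""
    home = f"$HOME/neo4j/neo4j-{name}"
    return {
        "image": IMAGE,
        "networks": ["lan"],
        # host:container port mappings, same port on both sides
        "ports": [f"{p}:{p}" for p in (http_port, https_port, bolt_port)],
        # one dedicated host directory per instance, as in the snippet
        "volumes": [f"{home}/{d}:/{d}" for d in NEO4J_DIRS],
        "environment": [
            "NEO4J_AUTH=neo4j/changeMe",
            f"NEO4J_dbms_mode={mode}",
            "NEO4J_ACCEPT_LICENSE_AGREEMENT=yes",
            f"NEO4J_causalClustering_expectedCoreClusterSize={len(core_names)}",
            "NEO4J_causalClustering_initialDiscoveryMembers="
            + ",".join(f"{core}:5000" for core in core_names),
            f"NEO4J_dbms_connector_http_listen__address=:{http_port}",
            f"NEO4J_dbms_connector_https_listen__address=:{https_port}",
            f"NEO4J_dbms_connector_bolt_listen__address=:{bolt_port}",
        ],
    }


def build_compose(core_names=("core1", "core2", "core3"), replica_names=("read1",)):
    """Assemble core and read replica services into one Compose structure."""
    services = {}
    for offset, name in enumerate(tuple(core_names) + tuple(replica_names)):
        # The only per-role difference is dbms_mode, mirroring read1.
        mode = "CORE" if name in core_names else "READ_REPLICA"
        # Assumed port scheme: shift each of core1's ports by the instance's offset.
        services[name] = neo4j_service(
            name, mode, 7474 + offset, 6477 + offset, 7687 + offset, core_names
        )
    # Assumed file header and network definition (not shown in the post).
    return {"version": "3", "services": services, "networks": {"lan": {}}}


if __name__ == "__main__":
    print(json.dumps(build_compose(), indent=2))
```

Running the script prints the generated structure as JSON for inspection. Each entry differs only in its name, directories, and ports, plus the mode for read1, which matches the repetition described above.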

Neo4j’s /conf, /data, /logs, and /plugins directories are mapped to your local filesystem. This persists Neo4j’s data across container stops and restarts. It also lets you access and configure each cluster instance just as you would a standalone instance, for example by reading logs from $HOME/neo4j/neo4j-<instance-name>/logs or deploying plugins to $HOME/neo4j/neo4j-<instance-name>/plugins.
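Docker creates missing bind-mount directories automatically. With a default (root) Docker daemon on Linux, however, those directories are typically owned by root, so it can be convenient to create the layout yourself, for example to drop plugins in before the first start. Here is a minimal Python sketch. It assumes the neo4j-<instance-name> directory naming seen in the core1 snippet, and `create_instance_dirs` is a hypothetical helper.

```python
from pathlib import Path

NEO4J_DIRS = ("conf", "data", "logs", "plugins")


def create_instance_dirs(base, instance_names):
    """Create <base>/neo4j-<name>/{conf,data,logs,plugins} for each instance.

    Existing directories are left untouched, so the function is safe to re-run.
    Returns the list of directory paths in creation order.
    """
    paths = []
    for name in instance_names:
        for sub in NEO4J_DIRS:
            path = Path(base) / f"neo4j-{name}" / sub
            # parents=True builds intermediate dirs; exist_ok=True makes re-runs harmless
            path.mkdir(parents=True, exist_ok=True)
            paths.append(path)
    return paths
```

For the instances named in this post, that would be `create_instance_dirs(Path.home() / "neo4j", ["core1", "core2", "core3", "read1"])`.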

Stopping the Cluster

When finished, the cluster can be shut down by opening a shell in the same directory as docker-compose.yml and executing:

```shell
docker-compose down
```

Conclusion

Clustering is a key feature, providing the availability, reliability, scalability, and fault tolerance required by enterprise-grade applications. Neo4j Enterprise Edition offers causal clustering, capable of creating ultra-large clusters and a wide range of cluster topologies, to meet these demands. Using Docker and the provided docker-compose.yml, you can have a Neo4j causal cluster up and running in a matter of minutes, ready for exploration.